TL;DR: Before layering on AI tools, nail the foundations: clean crawlability, structured data, logical site architecture, and content that answers real questions.

Before you let AI touch your SEO, get your foundations right. We learned this the hard way.

When we first integrated AI-assisted internal linking into SEOJuice, we tested it on about 40 customer sites in a controlled rollout. The results split cleanly into two groups: sites with solid architecture saw immediate, measurable improvements in link equity distribution. Sites with structural problems? The AI made things worse — it confidently auto-linked to orphaned pages, created loops between thin content, and amplified every existing mess at machine speed. (One site ended up with 200+ new internal links pointing to pages that were canonicalized to other URLs. It took a week to untangle.)

That experience crystallized something I now consider a law of AI-assisted SEO: large language models don't improvise well with broken inputs. They follow patterns, surface structure, and amplify what's already there, for better or worse. If your foundation is solid, AI is a force multiplier. If your foundation is broken, AI is a mess multiplier.

This article answers a practical question: What elements are foundational for SEO with AI? Not in theory. In execution — the kind you can review in a CMS, plug into an audit, and use to build systems that don't collapse under scale.

AI tools can analyze content, suggest links, summarize intent, and generate outlines. None of that works if the underlying structure of your site is unreadable. I've audited sites where the AI content generation was genuinely good — well-written, on-topic, keyword-aware — but the articles were published six clicks deep in a broken hierarchy with no internal links pointing to them. Google never found them. The AI did its job; the architecture failed.
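Click depth is straightforward to measure once you have a crawl export. The following is a minimal sketch, assuming a hypothetical `links` mapping from each page to the pages it links to; the function names and sample URLs are illustrative and not taken from any specific tool.

```python
from collections import deque


def click_depths(links: dict[str, list[str]], start: str = "/") -> dict[str, int]:
    """Breadth-first search from the homepage.

    Depth is the minimum number of clicks needed to reach a page.
    Pages that cannot be reached from `start` are absent from the result.
    """
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit in BFS is the shortest path
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def too_deep(links: dict[str, list[str]], max_depth: int = 3, start: str = "/") -> list[str]:
    """Return reachable pages that sit more than `max_depth` clicks from `start`."""
    return sorted(p for p, d in click_depths(links, start).items() if d > max_depth)


# Hypothetical crawl data: a paginated blog archive that buries an old post.
sample_links = {
    "/": ["/blog"],
    "/blog": ["/blog/page-2"],
    "/blog/page-2": ["/blog/page-3"],
    "/blog/page-3": ["/blog/old-post"],
}
print(too_deep(sample_links, max_depth=3))
```

The `max_depth` threshold of 3 is an assumption for illustration; pick whatever limit matches your own site.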

A disorganized site hierarchy blocks indexing, wastes crawl budget, and confuses internal link logic. This is where I see the most wasted AI investment — teams spending thousands on AI content workflows while their sitemap includes 300 pages that Google hasn't crawled in six months.

Logical URL hierarchy

Paths should reflect real structure. /services/seo/technical is crawlable and interpretable. /page123?ref=top is neither. We've seen URL structure changes alone improve crawl coverage by 30-40% on sites with deeply nested content.
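To make the contrast concrete, here is a rough sketch that reports a few structural facts about a URL. The heuristics (query strings and digits in path segments as warning signs) are assumptions for illustration, not rules any search engine publishes.

```python
from urllib.parse import urlsplit


def describe_url(url: str) -> dict:
    """Return simple structural facts about a URL's path."""
    parts = urlsplit(url)
    segments = [s for s in parts.path.split("/") if s]
    return {
        "depth": len(segments),            # number of path segments
        "has_query": bool(parts.query),    # e.g. ?ref=top
        # Heuristic only: digits in a segment often indicate an opaque ID.
        "has_digits": any(ch.isdigit() for s in segments for ch in s),
    }


for candidate in ("/services/seo/technical", "/page123?ref=top"):
    print(candidate, describe_url(candidate))
```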

Consistent internal linking

Pages need context from other pages. If your cornerstone content has only three internal links, all from irrelevant pages, AI tools won't recognize its importance, and neither will search engines. This is exactly the problem our auto-linking feature solves, but it can only work if the pages themselves are worth linking to.

No orphaned pages

Pages that exist without inbound links are effectively invisible. Automation won't save them. Our crawl audit flags orphaned pages specifically because they're the most common reason AI-generated content underperforms — it gets published and then sits in a black hole.
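Orphan detection reduces to a set difference once you have a list of known pages and a link graph. This is a minimal sketch under assumed inputs (a `pages` set and a `links` mapping you would build from your own crawl); it is not how any particular audit tool implements the check.

```python
def find_orphans(pages: set[str], links: dict[str, set[str]], home: str = "/") -> set[str]:
    """Return pages that receive no inbound internal link from another page.

    The homepage is excluded because it is the crawl entry point.
    """
    linked = set()
    for source, targets in links.items():
        # A page linking to itself does not make it discoverable.
        linked.update(t for t in targets if t != source)
    return pages - linked - {home}


# Hypothetical data: /blog/new-ai-post was published but never linked.
pages = {"/", "/services", "/blog", "/blog/new-ai-post"}
links = {"/": {"/services", "/blog"}, "/blog": {"/services"}}
print(find_orphans(pages, links))
```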

De-duped content paths

Canonicalization and redirect logic need to be sorted before you scale anything. AI tools don't know which version of a page is primary unless the architecture makes it explicit.
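One check worth running before any automated linking is whether existing links point at URLs that declare a different canonical. The sketch below assumes you already have a hypothetical `canonical` mapping (URL to its declared canonical URL) and a list of `(source, target)` link pairs from a crawl.

```python
def links_to_non_canonical(
    links: list[tuple[str, str]],
    canonical: dict[str, str],
) -> list[tuple[str, str, str]]:
    """Return (source, target, preferred) for links whose target is canonicalized elsewhere."""
    flagged = []
    for source, target in links:
        # URLs missing from the mapping are treated as self-canonical.
        preferred = canonical.get(target, target)
        if preferred != target:
            flagged.append((source, target, preferred))
    return flagged


# Hypothetical data: a tracking-parameter URL canonicalized to the clean one.
canonical = {"/pricing?ref=nav": "/pricing"}
links = [("/", "/pricing?ref=nav"), ("/blog", "/pricing")]
for source, target, preferred in links_to_non_canonical(links, canonical):
    print(f"{source} links to {target}; link to {preferred} instead")
```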

Clean navigation and sitemap

Menus and XML sitemaps should reflect real priorities, not every page ever published. AI crawling relies on signal strength, not volume.
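A quick way to see whether a sitemap reflects priorities is to diff it against the list of pages you actually care about. This sketch parses a standard XML sitemap with the Python standard library; the `priority_pages` set and the sample URLs are assumptions for illustration.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(xml_text: str) -> list[str]:
    """Extract every <loc> value from a <urlset> sitemap."""
    root = ET.fromstring(xml_text)
    return [loc.text.strip() for loc in root.findall("sm:url/sm:loc", SITEMAP_NS) if loc.text]


def sitemap_extras(xml_text: str, priority_pages: set[str]) -> list[str]:
    """Return sitemap URLs that are not on the priority list."""
    return sorted(set(sitemap_urls(xml_text)) - priority_pages)


SAMPLE = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/services/seo</loc></url>
  <url><loc>https://example.com/tag/misc-2014</loc></url>
</urlset>"""

print(sitemap_extras(SAMPLE, {"https://example.com/services/seo"}))
```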

Structure isn't glamorous. But without it, every AI-generated blog post, every auto-inserted link, and every suggested cluster sits on unstable ground. I think of it like pouring a concrete foundation before framing a house — nobody photographs the foundation, but everything above it depends on getting it right.

AI tools rely on clear signals — names, terms, labels, structure — to figure out what your content is about and how it connects. This seems obvious until you actually audit a real site.

One of our customers (a B2B SaaS company) called their product "Growth Accelerator" on their blog, "Scale Platform" on their homepage, and "Startup Toolkit" on their product page. Their AI-generated content briefs were incoherent because the underlying entity was undefined. When we standardized the naming across 40+ pages, their topical authority scores improved measurably within two months — not because the content changed, but because the signal became interpretable.

Inconsistent entities break semantic understanding and confuse both LLMs and search engines. Automation can't fix this. It needs something coherent to work from.

| Entity Type | Common Problems | Fix |
|---|---|---|
| Product/Service Names | Variations across blog, product page, and social posts | Create a controlled vocabulary and enforce it across all assets |
| Company Name | Abbreviated, stylized, or inconsistent brand mentions | Lock usage: always "SEOJuice," never "SJ," "SEO Juice," etc. |
| People / Team Members | First name only, role missing, inconsistent job titles | Standardize titles + names in bios, schema, bylines |
| Industries Served | Vague verticals like "tech," "B2B," or "online services" | Use specific language: "direct-to-consumer ecom," "SaaS email tools" |
| Feature Naming | Internal nicknames leaking into blog posts or sales decks | Sync naming in UI, docs, marketing, and structured data |

Run a site-wide entity consistency audit — Pull every instance of key entity names and map inconsistencies. Fix them at both the template and content levels. (We built a feature in SEOJuice that flags entity inconsistencies during crawl analysis specifically because this problem is so pervasive.)

Use structured data for reinforcement — Add schema to product pages, team bios, and org-level info. AI models often rely on schema to resolve meaning when page content is ambiguous.

Map internal linking to consistent anchor text — If a product is linked 20 times with 15 different anchor text variations, AI tools dilute the signal. Pick one canonical anchor and use it.

Document naming conventions — Keep a glossary of approved terms. Share it with anyone creating or prompting content. This sounds bureaucratic until you see the chaos that results from not doing it.
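The audit and anchor-text steps above can be sketched in a few lines. This is an illustrative example only: the `pages` mapping, the variant list, and the link pairs are hypothetical inputs you would build from your own crawl, and the matching is a simple case-sensitive whole-word search.

```python
import re
from collections import Counter, defaultdict


def count_variants(pages: dict[str, str], variants: list[str]) -> Counter:
    """Count whole-word occurrences of each name variant across all page texts."""
    counts = Counter()
    for text in pages.values():
        for variant in variants:
            pattern = rf"\b{re.escape(variant)}\b"
            counts[variant] += len(re.findall(pattern, text))
    return counts


def anchor_variants(links: list[tuple[str, str]]) -> dict[str, Counter]:
    """Group anchor texts by link target; `links` holds (target_url, anchor_text) pairs."""
    by_target: dict[str, Counter] = defaultdict(Counter)
    for target, anchor in links:
        by_target[target][anchor.strip().lower()] += 1
    return dict(by_target)


# Hypothetical page texts and internal links.
pages = {
    "/blog/launch": "SEO Juice now ships auto-linking. SEOJuice users get it free.",
    "/about": "SEOJuice (SJ) is built by a small team.",
}
print(count_variants(pages, ["SEOJuice", "SEO Juice", "SJ"]))

links = [("/product", "Growth Accelerator"), ("/product", "Scale Platform"), ("/product", "growth accelerator")]
print(anchor_variants(links))
```

Any variant with a nonzero count other than the approved name is a candidate for cleanup, and any target with more than one anchor key is worth reviewing.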

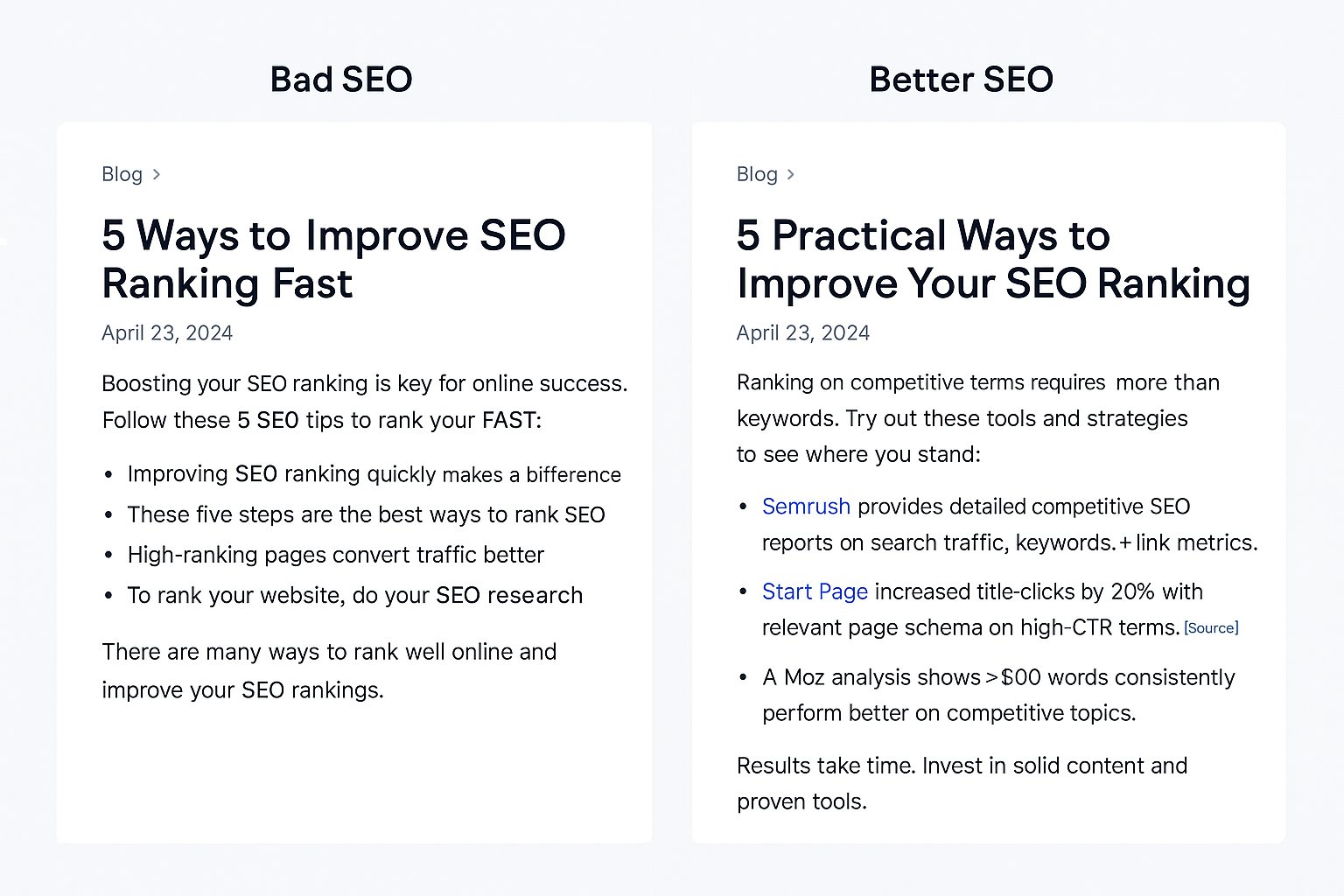

Plenty of pages are technically optimized — meta tags filled, H1s aligned, links added — but offer nothing a language model can reuse or quote. They're SEO-shaped containers with no actual content inside. I call these "checkbox pages" because they exist to tick off an SEO checklist, not to serve a reader or an AI.

If the content doesn't include specific, verifiable, structured information, LLMs will treat it like noise. These tools don't score based on formatting — they process based on meaning. A page that says "many companies see results" contributes nothing. A page that says "Three SaaS teams reported a 2x increase in trial signups within 30 days of implementing schema markup" gives both humans and AI something to work with.

| Element | Why It Matters | Real Example |
|---|---|---|
| Named entities | Clarifies what the page is about | "SEOJuice," "Google Search Console," "SaaS onboarding" |
| Quantifiable data | Helps models assess specificity and relevance | "42% reduction in churn over 90 days" |
| Source attribution | Supports factual credibility | "Data from a 2023 Nielsen study" |
| Explicit outcomes | Makes content usable in summaries or AI answers | "Increased lead conversion by 31% after schema implementation" |
| Modular structure | Allows AI tools to extract answers, definitions, or examples | Lists, FAQs, short summaries, structured callouts |

Don't just say "Our tool improves visibility." Say "Our crawl audit feature flagged 230 broken links on a 500-page ecommerce site, and fixing them recovered 12% of lost organic traffic within 6 weeks." That's a data-rich claim that an AI can quote, a journalist can reference, and a prospect can evaluate.

Most sites treat schema as an afterthought — a plugin default with no customization. That leaves significant value on the table, and I see this constantly in our audits.

The most common mistake: using BlogPosting schema on every page regardless of content type. Your pricing page should use Product schema. Your help center guide should use HowTo. Your team page should use Person and Organization. When schema matches content type and intent — not just template defaults — it adds structure that machines use to validate and resurface your content.

| Schema Type | Best Used For | Why It Matters |
|---|---|---|
| Organization | About pages, contact pages, site-wide identity | Anchors brand entity in Knowledge Graph |
| Product | Feature pages, software listings | Helps tools understand pricing, specs, and benefits |
| FAQPage | Q&A sections, bottom-of-funnel pages | Extracts direct answers for AI summaries or SGE displays |
| HowTo | Step-based guides | Enables structured walkthroughs in SERPs and LLM summaries |
| Article + BlogPosting | Editorial content | Flags publish date, author, and content body type |
| Review + Rating | Product/service reviews, testimonials | Adds trust indicators and structured scoring |
| BreadcrumbList | Any page with hierarchy or depth | Improves crawlability, reinforces structure |

We built a schema markup generator as a free tool on SEOJuice specifically because we kept seeing the same mistake: sites using plugin-generated default schema that didn't match their actual content. Validate with both Google's Rich Results Test and the Schema.org validator — each catches different issues.
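As a rough illustration of matching schema type to page type rather than relying on a template default, here is a sketch that builds a bare-bones JSON-LD object. The page-kind keys, the `WebPage` fallback, and the example URL are assumptions; real Product or HowTo markup needs additional type-specific properties, so treat the output as a starting skeleton and run it through the validators.

```python
import json

# Hypothetical mapping from page kind to schema.org type, following the advice above.
SCHEMA_BY_PAGE_KIND = {
    "pricing": "Product",
    "help_guide": "HowTo",
    "team": "Organization",
    "blog_post": "BlogPosting",
}


def build_json_ld(page_kind: str, name: str, url: str) -> str:
    """Return a minimal JSON-LD string for the page.

    The result belongs inside a <script type="application/ld+json"> tag.
    Unknown page kinds fall back to the generic WebPage type.
    """
    data = {
        "@context": "https://schema.org",
        "@type": SCHEMA_BY_PAGE_KIND.get(page_kind, "WebPage"),
        "name": name,
        "url": url,
    }
    return json.dumps(data, indent=2)


print(build_json_ld("pricing", "Example Plan", "https://example.com/pricing"))
```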

Here's something I didn't appreciate until we started building AI-powered features: the quality of AI output is directly proportional to the quality of your source material. If product names, feature details, and positioning are scattered across blog posts, pitch decks, and outdated PDFs, there's no reliable signal for the AI to work from.

When we prompted our own AI systems for content briefs, output quality improved dramatically once we centralized our source-of-truth content into structured, indexable pages. The same principle applies to any site that wants AI tools, whether internal ones or external ones like ChatGPT, to represent it accurately.

| Element | Function |
|---|---|
| Product overview page | One canonical source per product with specs, features, use cases |
| Glossary of terms | Defines internal language, industry terms, feature names |
| Founders/team bios | Consistent structure for name, title, company role |
| Pricing structure page | Transparent tiers, feature access, and value statements |
| Feature changelog | Time-stamped updates for context and recency |
| Central FAQ / knowledge base | Answers to recurring questions in structured format |

Create these as public, crawlable pages — not gated PDFs. Structure them with schema and internal links. Keep the language literal (skip taglines — AI tools do not interpret slogans). And then route all AI-assisted workflows through this base layer. When structured correctly, this knowledge layer becomes the source of truth for your content, your team, and every AI model that touches your site.

AI-driven SEO works best when content is treated less like essays and more like building blocks — self-contained, reusable, structured pieces that can serve multiple purposes across blog posts, landing pages, chatbot answers, and AI-generated snippets.

| Block Type | Where It's Reused | Example |
|---|---|---|
| Short definitions | Intros, glossary, FAQ, chatbots | "Technical SEO involves optimizing crawl paths, indexability, and site structure." |
| Value statements | Product pages, feature lists, social copy | "SEOJuice automates internal linking using real URL authority data." |
| Mini case stats | Blog content, AI briefs, social posts | "Cut time-to-publish by 58% after shifting to AI-assisted briefs." |
| Step-by-step guides | How-to pages, support content, LLM output | "1. Run an audit. 2. Identify orphan pages. 3. Create internal links..." |
| Snippets and summaries | Featured answers, meta descriptions, cards | "This guide explains how to prepare your site for scalable AI-based SEO." |

The practical advice: write in short, extractable segments. Every paragraph should make sense in isolation. Avoid soft intros and narrative padding — no "Let's dive in" or "In today's fast-paced world." Just the point. (I realize the irony of saying this in an article that's now several thousand words, but each section here is designed to stand alone.)

AI tools can generate, cluster, and suggest — but they can't tell you what worked without tracking data. Without feedback loops, automation produces more output with no direction. You're guessing faster, not improving.

| Metric | Purpose | Why It Matters |
|---|---|---|
| Organic CTR | Measures headline + meta performance | Feeds prompt optimization and meta refinements |
| Scroll depth | Indicates content usefulness | Flags weak intros or poor modular structure |
| Time on page (by template) | Assesses layout effectiveness | Informs future templates, not just topics |
| Conversion per page | Connects content to business outcomes | Ties AI briefs to real value |
| Internal link flow | Tracks how traffic moves through suggested links | Helps retrain AI models that cluster or auto-link content |
| Branded vs. non-branded queries | Separates awareness from intent traffic | Improves targeting for top vs. bottom funnel automation |

The key insight from running our own AI-assisted content pipeline: loop insights back into your prompt workflows. High-performing intros? Feed them into the next AI-generated brief as examples. Low dwell time on a content module? Flag that format for revision. Track by page cluster, not individual posts — the patterns only emerge at the cluster level.
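Tracking by cluster instead of by post is mostly an aggregation step. The sketch below assumes a hypothetical export where each row carries a `cluster` label plus `clicks` and `impressions`; the field names and numbers are illustrative and do not match any particular analytics tool.

```python
from collections import defaultdict


def ctr_by_cluster(rows: list[dict]) -> dict[str, float]:
    """Aggregate clicks and impressions per cluster, then compute CTR.

    Summing before dividing weights each page by its impressions,
    which differs from averaging per-page CTRs.
    """
    clicks: dict[str, int] = defaultdict(int)
    impressions: dict[str, int] = defaultdict(int)
    for row in rows:
        clicks[row["cluster"]] += row["clicks"]
        impressions[row["cluster"]] += row["impressions"]
    # Skip clusters with zero impressions to avoid division by zero.
    return {c: clicks[c] / impressions[c] for c in impressions if impressions[c] > 0}


# Hypothetical per-page rows.
rows = [
    {"page": "/blog/crawl-budget", "cluster": "technical-seo", "clicks": 30, "impressions": 1000},
    {"page": "/blog/canonicals", "cluster": "technical-seo", "clicks": 10, "impressions": 1000},
    {"page": "/blog/faq-schema", "cluster": "schema", "clicks": 5, "impressions": 100},
]
for cluster, ctr in sorted(ctr_by_cluster(rows).items()):
    print(f"{cluster}: {ctr:.1%}")
```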

If the site is slow, the structure is broken, or the content says nothing useful, AI won't hide it. It will help you scale those problems faster. I've seen this firsthand — that early rollout disaster I mentioned taught us more about foundation requirements than any amount of planning would have.

What elements are foundational for SEO with AI? The ones that remove ambiguity, clarify intent, and connect data to action.

No AI tool replaces strategy. But once the foundation is in place, it becomes a genuine force multiplier. Workflows get faster. Briefs get sharper. Optimizations move from gut instinct to systematized logic.

Get the structure right first. Then scale with AI. Not before.

Clear site architecture, consistent entity naming, structured data (schema), content modules, and trackable performance signals. AI tools need clean inputs and verifiable structure to produce useful outputs.

No. AI can audit and flag, but it doesn't patch broken redirects, flatten URLs, or clean crawl paths. You need a functional technical base before using AI for content or internal linking.

Schema defines what a page is about, who created it, and how it should be interpreted. Without it, content may be skipped or misclassified by both search engines and language models.

Short, standalone modules — definitions, stat blocks, how-to steps, FAQs. These formats can be reused, quoted, or summarized by both AI tools and humans.

Yes. A centralized, public, indexable knowledge layer ensures consistent product names, descriptions, and outcomes. It improves both internal AI prompting and external AI visibility.

Scroll depth, conversions, CTR, internal link behavior, and outcome-based tagging. This data improves AI-generated briefs and flags which content formats actually work.

Start with support — briefs, outlines, link suggestions, repurposing. Full content generation only works when you already have a solid voice, format, and fact base to train against.

More noise, buried best pages, and maintenance overhead. Quantity without structure tanks relevance and authority fast — we've seen this happen to customer sites that scaled AI content before fixing their foundations.

Train against your knowledge layer: approved definitions, key phrases, case stats, and value props. Pull from structured source material, not your latest social post.

Yes, but prioritize. Start with money pages, highest-traffic posts, and anything targeted by AI-powered SERPs. Add structure, clarify entities, insert schema, and track outcomes.
